Unraveling the Intricacies of AI: A Comparative Study of img2prompt and blip-2 Models

Understanding the practical uses, strengths, and distinct characteristics of two top-tier image-to-text models.

Whether we're trying to auto-generate meaningful textual prompts from visual content or seeking answers to specific queries about images, AI models have become our go-to solution. Two such models that have captured significant attention recently are the img2prompt and blip-2 models.

Subscribe or follow me on Twitter for more content like this!

For instance, consider a content creator who routinely has to devise catchy prompts based on various images. Using img2prompt, they can transform images into engaging text prompts, streamlining their creative process. Alternatively, imagine a customer support scenario where a representative needs to answer queries about product images. Here blip-2 can be a game-changer, generating answers swiftly and accurately.

In this blog post, we'll compare the img2prompt and blip-2 models, both of which are among the top-ranked on AIModels.fyi: img2prompt is ranked 24th, while blip-2 is ranked 14th. We'll also learn how to use AIModels.fyi to discover similar models and compare them. So, let's dive right in!

About the img2prompt Model

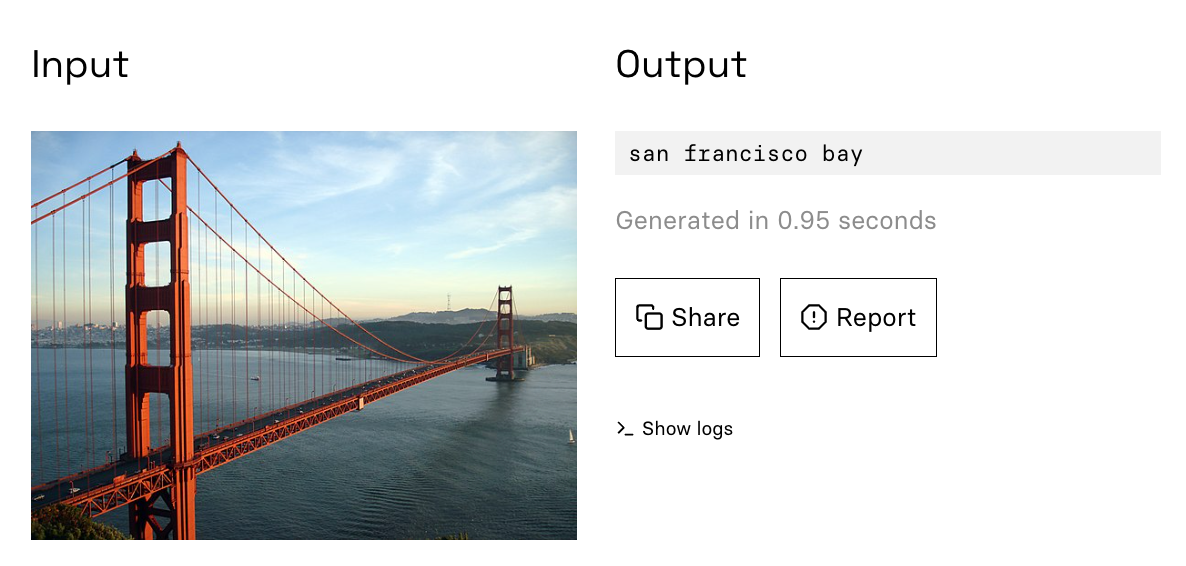

The img2prompt model by methexis-inc allows users to obtain an approximate text prompt, designed with a stylistic flair, that matches an image. Optimized for stable-diffusion (clip ViT-L/14), this model offers an exciting approach for those who wish to extract textual insights from images.

Essentially, the img2prompt model acts as a translator, converting visual data into text. But it doesn't merely describe the image; it goes a step further and crafts a text prompt that maintains a stylistic quality, making it particularly appealing for content creators, marketers, and writers.

The img2prompt model's details and API specifications can be found on its dedicated model page. The model uses the clip interrogator under the hood, about which you can read more in this article.

Understanding the Inputs and Outputs of img2prompt

Understanding how a model works involves familiarizing ourselves with its inputs and outputs. For img2prompt, the process is straightforward.

Inputs

The img2prompt model requires a single input: an image file. The image serves as the source for generating the text prompt.

Outputs

The output of the img2prompt model is a text string, representing the stylistically crafted prompt that corresponds to the input image.
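
To make the single-input, single-output shape concrete, here is a minimal Python sketch. It makes no network call. The field name `image`, the list of accepted file types, and the idea that the returned prompt is a comma-separated list of phrases are all assumptions for illustration; the actual names and formats are listed in the API specification on the model page.

```python
from pathlib import Path
from typing import Dict, List

# Assumption: these suffixes are illustrative, not the model's documented list.
SUPPORTED_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


def build_img2prompt_input(image_path: str) -> Dict[str, str]:
    """Build the one-field input payload (the field name "image" is assumed)."""
    suffix = Path(image_path).suffix.lower()
    if suffix not in SUPPORTED_SUFFIXES:
        raise ValueError(f"unsupported image type: {suffix!r}")
    return {"image": image_path}


def split_prompt(prompt: str) -> List[str]:
    """Split a returned prompt into phrases, assuming comma separation."""
    return [part.strip() for part in prompt.split(",") if part.strip()]
```

For example, `split_prompt("a cat on a sofa, oil painting, warm light")` separates the subject description from the stylistic modifiers, which can be handy if you want to keep the style phrases and swap in a different subject.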

About the blip-2 Model

The blip-2 model, developed by Salesforce, in contrast excels at answering questions about images. You provide an image and a question related to it, and the model returns an answer based on the image's content.

Essentially, blip-2 acts as an intelligent image consultant. Whether you need to identify the elements in a picture or you're seeking a deeper interpretation of the visual content, blip-2 can deliver meaningful responses. The model page contains all the details and API specifications for blip-2.

The blip-2 model achieves its impressive performance thanks to the methodologies described in the BLIP-2 paper. For more information, refer to the model's beginner-friendly guide on AIModels.fyi's notes section.

Understanding the Inputs and Outputs of blip-2

Inputs

The blip-2 model accepts several inputs:

- An image file for query or captioning

- A boolean parameter that switches the model from answering questions to generating an image caption

- A string representing the question to ask about the image

- An optional string for previous questions and answers as context

Outputs

The output of the blip-2 model is a string containing the answer to the asked question. If the captioning parameter is set to true, it generates a descriptive caption for the provided image. The output also includes intermediate results such as attention maps and image embeddings, offering a detailed understanding of the model's functioning.
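
The inputs listed above can be sketched as a small Python data structure. This is an illustration only and makes no network call: the field names (`image`, `caption`, `question`, `context`), the "Question: ... Answer: ..." layout of the context string, and the choice to drop the question and context in caption mode are all assumptions; check the API specification on the model page for the real schema.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple, Union


def format_context(history: List[Tuple[str, str]]) -> str:
    """Join earlier (question, answer) pairs into one string (layout assumed)."""
    return " ".join(f"Question: {q} Answer: {a}" for q, a in history)


@dataclass
class Blip2Request:
    image: str                      # path or URL of the image
    question: Optional[str] = None  # required unless caption is True
    caption: bool = False           # True switches to captioning mode
    context: Optional[str] = None   # optional earlier questions and answers

    def to_input(self) -> Dict[str, Union[str, bool]]:
        """Return the input payload, validating the question/caption choice."""
        if not self.caption and not self.question:
            raise ValueError("provide a question or set caption=True")
        payload: Dict[str, Union[str, bool]] = {
            "image": self.image,
            "caption": self.caption,
        }
        if not self.caption:
            payload["question"] = self.question
            if self.context:
                payload["context"] = self.context
        return payload
```

A follow-up question would pass `context=format_context([("What is this?", "a shoe")])` alongside the new question, so that the model sees the earlier exchange.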

Comparing the img2prompt and blip-2 Models

Now that we have a basic understanding of both the img2prompt and blip-2 models, let's compare them based on their primary applications, strengths, and weaknesses.

Primary Applications

img2prompt: This model is suitable for anyone seeking to convert visual data into stylistic text prompts. Its applications range from helping content creators, copywriters, and marketers draft copy to fashioning catchy social media posts.

blip-2: blip-2 is designed to answer questions about images. It is an excellent tool for tasks involving image analysis, such as identifying elements in a picture, seeking a deeper interpretation of visual content, or generating captions for images. Its utility extends to customer support, market research, education, and much more.

Strengths

img2prompt: img2prompt's strength lies in its ability to generate creative, stylistic text prompts based on the provided image. This ability makes it highly valuable for content creation and marketing where engaging, nuanced language is vital.

blip-2: blip-2 excels at giving accurate and relevant answers to questions about images, which makes it particularly useful in fields that require precision and in-depth understanding. It can also generate descriptive captions for images.

Weaknesses

img2prompt: While img2prompt performs well at producing creative prompts, the generated prompts do not always capture the essence of the image. Improving the model's semantic understanding of images could further enhance its performance.

blip-2: Despite the impressive performance of blip-2 in answering questions about images, it might struggle with complex queries that involve abstract concepts or require deeper interpretation. Enhancing the model's capability to interpret and reason could improve its performance.

Discovering Similar Models on AIModels.fyi

If you're looking for models similar to img2prompt or blip-2, AIModels.fyi provides an easy way to find them. On the individual model page, scroll down to the "Similar Models" section. Here, you'll find a list of AI models that offer similar functionalities or utilize similar methodologies.

For example, on the img2prompt model page, you might find models related to text generation from images. On the blip-2 model page, you might find models related to image captioning or question answering.

AIModels.fyi also offers a comprehensive comparison feature that allows you to compare models based on a variety of metrics, including performance, applications, strengths, and weaknesses.

Conclusion

AI models like img2prompt and blip-2 have revolutionized the way we extract information from images. Whether you need to generate creative text prompts from images or answer questions about them, these models offer remarkable solutions.

Though they may have different primary applications and strengths, both models play crucial roles in the broader field of AI-driven image analysis. As technology continues to evolve, these models are poised to become even more powerful, providing us with more precise and meaningful ways to understand and interact with visual content.
