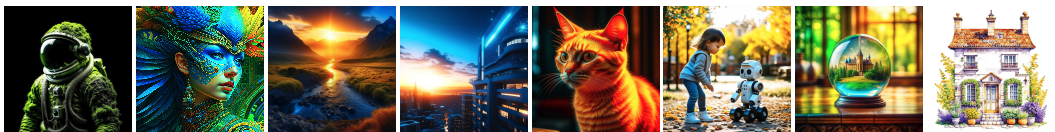

Meet Kandinsky 2.2: "It's Like if Midjourney Had an API"

Kandinsky v2.2 is a Midjourney alternative that produces high-quality images from text through a JavaScript API.

AI-powered image generation models are revolutionizing the creative landscape. The Midjourney platform has been a key player in this field with its text-driven image creation. However, its Discord-based interface presents some limitations for professional use.

Let's take a look instead at a new AI model called Kandinsky 2.2, a more builder-friendly text-to-image model available via a versatile API. Unlike Midjourney, which operates through Discord, Kandinsky lets developers call AI image generation from their own code in Python, Node.js, or cURL. This means that with just a few lines of code, you can automate image generation, making Kandinsky a more efficient tool for creative professionals. And with the new v2.2 release, Kandinsky's image quality has never been higher.

Subscribe or follow me on Twitter for more content like this!

Kandinsky 2.2 brings a new level of accessibility to AI image generation. It integrates with multiple programming languages and tools, which makes it more flexible than the Midjourney platform. Moreover, Kandinsky's advanced diffusion techniques result in impressively photorealistic images. Its API-first approach makes it easier for professionals to incorporate AI-powered visualization into their existing tech stack.

In this guide, we'll explore Kandinsky's potential for scalability, automation, and integration, and discuss how it can contribute to the future of creativity. Join us as we delve into the tools and techniques needed to incorporate stunning AI art into your products using this advanced AI model.

Key Benefits of Kandinsky 2.2

- Open source - Kandinsky is fully open source. Use the code directly or access it via Replicate's flexible API.

- API access - Integrate Kandinsky into your workflows in Python, Node.js, cURL, and more through the Replicate API.

- Automation - Tweak images programmatically by modifying text prompts in code for rapid iteration.

- Scalability - Generate thousands of images with simple API calls. Create storyboards and visualize concepts at scale.

- Custom integration - Incorporate Kandinsky into your own tools and products thanks to its API-first design.

- ControlNet - Get granular control over image composition by conditioning generation on auxiliary inputs such as depth maps.

- Multilingual - Understands prompts in English, Chinese, Japanese, Korean, French and more.

- High resolution - Crisp, detailed 1024x1024 images ready for any use case.

- Photorealism - State-of-the-art diffusion techniques produce stunning, realistic images on par with Midjourney.
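To make the automation and scalability points concrete, here is a minimal sketch of generating many prompt variations in code. The `expandPrompts` helper and its `{name}` placeholder syntax are illustrative inventions for this example, not part of Kandinsky or the Replicate client.

```javascript
// Expand a template such as "a {animal} photo, {style}" into every
// combination of the supplied values. Helper name and placeholder
// syntax are hypothetical, chosen only for this sketch.
function expandPrompts(template, variables) {
  let prompts = [template];
  for (const [name, values] of Object.entries(variables)) {
    const placeholder = `{${name}}`;
    // Replace the placeholder in every partial prompt with each value.
    prompts = prompts.flatMap((partial) =>
      values.map((value) => partial.replaceAll(placeholder, value))
    );
  }
  return prompts;
}

const prompts = expandPrompts("a {animal} photo, {style}", {
  animal: ["red cat", "blue fox"],
  style: ["8k", "watercolor"],
});

console.log(prompts);
```

Each resulting string could then be sent as the prompt of a separate API call, which is one way to iterate on an idea without editing prompts by hand.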

How does Kandinsky work?

Kandinsky 2.2 is a text-to-image diffusion model that generates images from text prompts. It consists of several key components:

- Text Encoder: The text prompt is passed through an XLM-Roberta-Large-Vit-L-14 encoder to extract semantic features and encode the text into a latent space. This produces a text embedding vector.

- Image Encoder: A pretrained CLIP-ViT-G model encodes images into the same latent space as the text embeddings. This allows matching between text and image representations.

- Diffusion Prior: A transformer maps between the text embedding latent space and the image embedding latent space. This establishes a diffusion prior that links text and images probabilistically.

- UNet: A 1.22B parameter Latent Diffusion UNet serves as the backbone network. It takes an image embedding as input and produces image samples through iterative denoising, moving from noisy to clean.

- ControlNet: An additional neural network that conditions image generation on auxiliary inputs like depth maps. This enables controllable image synthesis.

- MoVQ Encoder/Decoder: A discrete VAE that compresses images into discrete latent codes for more efficient sampling and decodes those latents back into pixels.

During training, text-image pairs are encoded to linked embeddings. The diffusion UNet is trained to invert these embeddings back to images through denoising.

For inference, the text is encoded to an embedding and mapped through the diffusion prior to an image embedding. Conditioned on that embedding, the UNet iteratively denoises a latent, which the MoVQ decoder then turns into the final image. The additional ControlNet allows conditioning on attributes like depth.
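The order of stages at inference time can be sketched with toy stand-ins. None of the functions below are real Kandinsky code: each one only tags its input so the data flow is visible, and the sketch assumes the usual latent-diffusion arrangement in which the UNet denoises in latent space and the MoVQ decoder produces the final pixels. The "halve the noise each step" rule is an arbitrary placeholder for real denoising.

```javascript
// Toy stand-ins for each stage (all names are hypothetical).
const encodeText = (prompt) => ({ stage: "text_embedding", prompt });
const applyPrior = (textEmbedding) => ({
  stage: "image_embedding",
  source: textEmbedding.stage,
});

function denoise(imageEmbedding, steps) {
  // Start from pure noise and pretend each step removes half of it.
  let latent = { stage: "latent", noise: 1, conditionedOn: imageEmbedding.stage };
  for (let step = 0; step < steps; step++) {
    latent = { ...latent, noise: latent.noise / 2 };
  }
  return latent;
}

const decodeLatent = (latent) => ({ stage: "image", residualNoise: latent.noise });

// Text -> text embedding -> image embedding -> denoised latent -> image.
function generate(prompt, steps = 4) {
  const textEmbedding = encodeText(prompt);
  const imageEmbedding = applyPrior(textEmbedding);
  const latent = denoise(imageEmbedding, steps);
  return decodeLatent(latent);
}

console.log(generate("a red cat photo, 8k"));
```

The loop also hints at why more inference steps cost more time: every step is another pass through the denoiser.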

Key improvements over prior versions of Kandinsky

The primary enhancements in Kandinsky 2.2 include:

- New Image Encoder - CLIP-ViT-G: One of the key upgrades is the integration of the CLIP-ViT-G image encoder. This upgrade significantly bolsters the model's ability to generate aesthetically pleasing images. By utilizing a more powerful image encoder, Kandinsky 2.2 can better interpret text descriptions and translate them into visually captivating images.

- ControlNet Support: Kandinsky 2.2 introduces the ControlNet mechanism, a feature that allows for precise control over the image generation process. With ControlNet, the model can condition generation on auxiliary inputs such as depth maps in addition to the text prompt, opening up new avenues for creative exploration.

How can I use Kandinsky to create images?

Ready to start creating with this powerful AI model? Here's a step-by-step guide to using the Replicate API to interact with Kandinsky 2.2. At a high level, you'll need to:

- Authenticate - Get your Replicate API key and authenticate in your environment.

- Send a prompt - Pass your textual description in the prompt parameter. You can specify it in multiple languages.

- Customize parameters - Tweak image dimensions, number of outputs, etc. as needed. Refer to the model spec for more details, or read on.

- Process the response - Kandinsky 2.2 outputs a URL to the generated image. Download this image for use in your project.

For convenience, you may also want to try out this live demo to get a feel for the model's capabilities before working on your code.

Step-by-Step Guide to Using Kandinsky 2.2 via the Replicate API

In this example, we'll use Node.js to work with the model, so you'll first need to install the Node.js client:

    npm install replicate

Then, copy your API token and set it as an environment variable:

    export REPLICATE_API_TOKEN=r8_*************************************

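Next, run the model with the following Node.js script. Because it uses top-level await, save it as an ES module (for example, with an .mjs extension):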

    import Replicate from "replicate";

    // The client authenticates with the token exported in the previous step.
    const replicate = new Replicate({
      auth: process.env.REPLICATE_API_TOKEN,
    });

    // Run the model and wait for the prediction to finish.
    const output = await replicate.run(
      "ai-forever/kandinsky-2.2:ea1addaab376f4dc227f5368bbd8eff901820fd1cc14ed8cad63b29249e9d463",
      {
        input: {
          prompt: "A moss covered astronaut with a black background",
        },
      }
    );

    console.log(output);

You can also set up a webhook for predictions to receive updates when the process is complete.

    const prediction = await replicate.predictions.create({
      version: "ea1addaab376f4dc227f5368bbd8eff901820fd1cc14ed8cad63b29249e9d463",
      input: {
        prompt: "A moss covered astronaut with a black background",
      },
      webhook: "https://example.com/your-webhook",
      webhook_events_filter: ["completed"],
    });

As you work this code into your application, you'll want to experiment with the model's parameters. Let's take a look at Kandinsky's inputs and outputs.
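One note on the webhook request shown earlier: the receiving endpoint is left to you. A minimal sketch of such a receiver might look like the following. It assumes the webhook body is the prediction object as JSON, with `id`, `status`, `output`, and `error` fields. The `summarizePrediction` and `handleWebhook` names are hypothetical.

```javascript
// Reduce a prediction payload to the parts an application usually needs.
// Assumes fields named id, status, output, and error.
function summarizePrediction(prediction) {
  if (prediction.status === "succeeded") {
    const urls = Array.isArray(prediction.output) ? prediction.output : [];
    return { id: prediction.id, ok: true, urls };
  }
  // Failed or canceled: report the error message if there is one.
  return {
    id: prediction.id,
    ok: false,
    reason: prediction.error ?? prediction.status,
  };
}

// Request handler compatible with Node's built-in http server.
function handleWebhook(req, res) {
  let body = "";
  req.on("data", (chunk) => {
    body += chunk;
  });
  req.on("end", () => {
    try {
      console.log(summarizePrediction(JSON.parse(body)));
      res.statusCode = 200;
      res.end("ok");
    } catch {
      res.statusCode = 400;
      res.end("invalid JSON");
    }
  });
}
```

To try it locally, pass `handleWebhook` to `createServer` from `node:http` and listen on a port of your choice. A production endpoint would also want to check that requests really come from the API provider.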

a red cat photo, 8k

Kandinsky 2.2's Inputs and Outputs

The text prompt is the core input that guides Kandinsky's image generation. By tweaking your prompt, you can shape the output.

- Prompt - The textual description, like "An astronaut playing chess on Mars." This is required.

- Negative Prompt - Specifies elements to exclude, like "no space helmet." Optional.

- Width and Height - Image dimensions in pixels, from 384 to 2048. Default is 512 x 512.

- Num Inference Steps - Number of denoising steps during diffusion. Higher values are slower but can produce higher quality. Default is 75.

- Num Outputs - Number of images to generate per prompt, default is 1.

- Seed - Integer seed for randomization. Leave blank for random.

Combining creative prompts with these tuning parameters allows you to dial in your perfect image.
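As a rough illustration, the parameters above could be assembled into an input object like this. The snake_case field names (`negative_prompt`, `num_inference_steps`, and so on) are assumptions based on the labels in the list, so confirm them against the model's schema before relying on them. `buildInput` is a hypothetical helper, and the range check only mirrors the 384 to 2048 limit stated above.

```javascript
// Defaults taken from the parameter list above.
// Field names are assumed, not confirmed.
const DEFAULTS = {
  width: 512,
  height: 512,
  num_inference_steps: 75,
  num_outputs: 1,
};

function buildInput(prompt, overrides = {}) {
  if (typeof prompt !== "string" || prompt.trim() === "") {
    throw new Error("prompt is required");
  }
  const input = { prompt, ...DEFAULTS, ...overrides };
  for (const side of ["width", "height"]) {
    const value = input[side];
    if (!Number.isInteger(value) || value < 384 || value > 2048) {
      throw new RangeError(`${side} must be an integer from 384 to 2048`);
    }
  }
  return input;
}

const input = buildInput("An astronaut playing chess on Mars", {
  negative_prompt: "no space helmet",
  width: 768,
  seed: 42, // a fixed seed is intended to make a run repeatable
});

console.log(input);
```

The resulting object can be passed as the `input` field of the `replicate.run` call shown earlier.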

Kandinsky Model Outputs

Kandinsky outputs one or more image URLs based on your inputs. The URLs point to 1024x1024 JPG images hosted on the backend. You can download these images to use in your creative projects. The number of outputs depends on the "num_outputs" parameter. The output schema looks like this:

    {
      "type": "array",
      "items": {
        "type": "string",
        "format": "uri"
      },
      "title": "Output"
    }

By generating variations, you can pick the best result or find inspiring directions.
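Since the result is an array of URLs, saving the images locally might look like the sketch below. It assumes each entry in the output array is a plain URL string, as the schema describes, and that you are on Node.js 18 or later, where `fetch` is available globally. The `outputFilename` and `saveOutputs` helpers and the `kandinsky-000` naming pattern are illustrative choices.

```javascript
// Derive a local file name from an output URL. Falls back to .png when the
// URL has no recognizable image extension (an arbitrary choice for this sketch).
function outputFilename(url, index) {
  const pathname = new URL(url).pathname;
  const match = pathname.match(/\.(png|jpe?g|webp)$/i);
  const extension = match ? match[1].toLowerCase() : "png";
  return `kandinsky-${String(index).padStart(3, "0")}.${extension}`;
}

// Download every URL in the output array into `directory`.
async function saveOutputs(urls, directory = ".") {
  // Dynamic import works in both ES modules and CommonJS scripts.
  const { mkdir, writeFile } = await import("node:fs/promises");
  await mkdir(directory, { recursive: true });

  const saved = [];
  for (const [index, url] of urls.entries()) {
    const response = await fetch(url);
    if (!response.ok) {
      throw new Error(`Download failed (${response.status}): ${url}`);
    }
    const path = `${directory}/${outputFilename(url, index)}`;
    await writeFile(path, Buffer.from(await response.arrayBuffer()));
    saved.push(path);
  }
  return saved;
}
```

With the script from the step-by-step guide, this could be called as `await saveOutputs(output, "images")` once the prediction has finished.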

What kinds of apps or products can I build with Kandinsky?

The ability to turn text into images is a remarkable innovation, and Kandinsky 2.2 is at the forefront of this technology. Let's explore some practical ways this model could be used.

In design, for instance, the rapid conversion of textual ideas into visual concepts could significantly streamline the creative process. Rather than relying on lengthy discussions and manual sketches, designers could use Kandinsky to instantly visualize their ideas, speeding up client approvals and revisions.

In education, the transformation of complex textual descriptions into visual diagrams could make learning more engaging and accessible. Teachers could illustrate challenging concepts on the fly, enhancing students' comprehension and interest in subjects like biology or physics.

watercolor mixed media masterpiece beautiful white cozy house with chimneys, a purple door, richly decorated with lupine, flower pots overgrown with moss, Provence, gold accents, shabby chic style, isolated on white, extremely photorealistic details, realistic high detail, high resolution

The world of film and web design could also benefit from Kandinsky 2.2. By turning written scripts and concepts into visuals, directors and designers can preview their work in real time. This immediate visualization could simplify the planning stage and foster collaboration between team members.

Moreover, Kandinsky's ability to produce high-quality images might open doors for new forms of artistic expression and professional applications. From digital art galleries to print media, the potential uses are broad and exciting.

But let's not lose sight of the practical limitations. While the concept is promising, real-world integration will face challenges, and the quality of generated images may vary or require human oversight. Like any emerging technology, Kandinsky 2.2 will likely need refinement and adaptation to meet diverse needs.

Taking it Further - Discover Similar Models with AIModels.fyi

AIModels.fyi is a valuable resource for discovering AI models tailored to specific creative needs. You can explore various types of models, compare them, and even sort by price. It's a free platform that offers digest emails to keep you informed about new models.

To find similar models to Kandinsky-2.2:

- Visit AIModels.fyi.

- Use the search bar to enter a description of your use case, for example "realistic portraits" or "high-quality text to image generator."

- View the model cards for each model and choose the best one for your use case.

- Check out the model details page for each model and compare to find your favorites.

Conclusion

In this guide, we've explored the innovative capabilities of Kandinsky-2.2, a multilingual text-to-image latent diffusion model. From understanding its technical implementation to utilizing it through step-by-step instructions, you're now equipped to leverage the power of AI in your creative endeavors. Additionally, AIModels.fyi opens doors to a world of possibilities by helping you discover and compare similar models. Embrace the potential of AI-driven content creation and subscribe for more tutorials, updates, and inspiration on AIModels.fyi. Happy exploring and creating!


Further Reading: Exploring AI Models and Applications

For those intrigued by the capabilities of AI models and their diverse applications, here are some relevant articles that delve into various aspects of AI-powered content generation and manipulation:

- AI Logo Generator: Erlich: Discover how the AI Logo Generator Erlich leverages AI to create unique and visually appealing logos, expanding your understanding of AI's creative potential.

- Best Upscalers: Uncover a comprehensive overview of the best upscaling AI models, providing insights into enhancing image resolution and quality.

- How to Upscale in Midjourney: A Step-by-Step Guide: Explore a detailed guide on how to effectively upscale images using the Midjourney AI model, enriching your knowledge of image enhancement techniques.

- Say Goodbye to Image Noise: How to Enhance Old Images with ScuNet GAN: Dive into the realm of image denoising and restoration using ScuNet GAN, gaining insights into preserving image quality over time.

- Breathe New Life into Old Photos with AI: A Beginner's Guide to Gfpgan: Learn how Gfpgan AI model breathes new life into old photos, providing you with a beginner's guide to revitalizing cherished memories.

- Comparing Gfpgan and Codeformer: A Deep Dive into AI Face Restoration: Gain insights into the nuances of AI-based face restoration by comparing Gfpgan and Codeformer models.

- NightmareAI: AI Models at Their Best: See the best models from the Nightmare AI team.

- ESRGAN vs. Real-ESRGAN: From Theoretical to Real-World Super Resolution with AI: Understand the nuances between ESRGAN and Real-ESRGAN AI models, shedding light on super-resolution techniques.

- Real-ESRGAN vs. SwinIR: AI Models for Restoration and Upscaling: Compare Real-ESRGAN and SwinIR models, gaining insights into their effectiveness in image restoration and upscaling.
