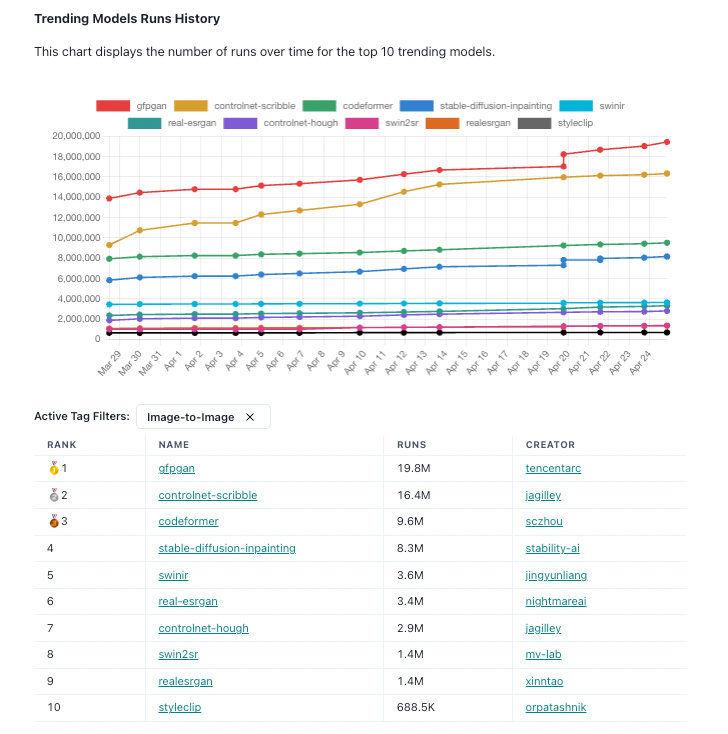

The Best AI Image-to-Image Models on Replicate (April 2023)

The top AI Image-to-Image models, using April 2023 data from Replicate Codex

If you're looking for the best AI image-to-image models, you've come to the right place. Replicate is a platform that lets users share and run machine learning models, and the list below ranks its 10 most-run image-to-image models using April 2023 data from Replicate Codex. Here's what you need to know about each model.

| Rank | Model Name | Runs | Creator | Model Detail Page |
|---|---|---|---|---|
| 1 | gfpgan | 19.8M | tencentarc | https://www.replicatecodex.com/models/279 |
| 2 | controlnet-scribble | 16.4M | jagilley | https://www.replicatecodex.com/models/54 |
| 3 | codeformer | 9.6M | sczhou | https://www.replicatecodex.com/models/284 |
| 4 | stable-diffusion-inpainting | 8.3M | stability-ai | https://www.replicatecodex.com/models/92 |
| 5 | swinir | 3.6M | jingyunliang | https://www.replicatecodex.com/models/258 |
| 6 | real-esrgan | 3.4M | nightmareai | https://www.replicatecodex.com/models/355 |
| 7 | controlnet-hough | 2.9M | jagilley | https://www.replicatecodex.com/models/47 |
| 8 | swin2sr | 1.4M | mv-lab | https://www.replicatecodex.com/models/200 |
| 9 | realesrgan | 1.4M | xinntao | https://www.replicatecodex.com/models/286 |
| 10 | styleclip | 688.5K | orpatashnik | https://www.replicatecodex.com/models/218 |
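
To put the run counts in perspective, here is a small Python sketch that converts the abbreviated figures from the table (such as 19.8M and 688.5K) into integers and works out each model's share of the combined total. The helper names `parse_runs` and `run_shares` are made up for this illustration, and the numbers are simply the ones listed in the table.

```python
from typing import Dict

# Run counts copied from the table above (April 2023 data).
RUNS: Dict[str, str] = {
    "tencentarc/gfpgan": "19.8M",
    "jagilley/controlnet-scribble": "16.4M",
    "sczhou/codeformer": "9.6M",
    "stability-ai/stable-diffusion-inpainting": "8.3M",
    "jingyunliang/swinir": "3.6M",
    "nightmareai/real-esrgan": "3.4M",
    "jagilley/controlnet-hough": "2.9M",
    "mv-lab/swin2sr": "1.4M",
    "xinntao/realesrgan": "1.4M",
    "orpatashnik/styleclip": "688.5K",
}

_SUFFIXES = {"K": 1_000, "M": 1_000_000, "B": 1_000_000_000}


def parse_runs(text: str) -> int:
    """Convert an abbreviated count such as '19.8M' or '688.5K' to an integer."""
    text = text.strip().upper()
    if text and text[-1] in _SUFFIXES:
        return round(float(text[:-1]) * _SUFFIXES[text[-1]])
    return round(float(text))


def run_shares(runs: Dict[str, str]) -> Dict[str, float]:
    """Return each model's fraction of the combined run count."""
    counts = {name: parse_runs(value) for name, value in runs.items()}
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


for name, share in run_shares(RUNS).items():
    print(f"{name:42s} {share:6.1%}")
```

On these figures, the top three entries account for about 68% of all runs in the top 10, so popularity is heavily concentrated at the head of the list.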

🥇1. Gfpgan

With 19.8M runs, gfpgan is the most popular image-to-image model on Replicate. Developed by TencentARC, gfpgan is a face restoration model: it uses a generative adversarial network (GAN) with a pretrained facial prior to repair faces in old, low-quality, or AI-generated photos. The goal is to rebuild facial detail and texture while keeping the person recognizable.

🥈2. Controlnet-scribble

The second most popular model on Replicate is controlnet-scribble, with 16.4M runs. Developed by jagilley, this model uses ControlNet, a neural network that adds extra conditioning inputs to Stable Diffusion, to generate detailed images from rough sketches or "scribbles" combined with a text prompt. It suits a variety of applications, from landscape generation to character design.

🥉3. Codeformer

Codeformer, with 9.6M runs, is the third most popular image-to-image model on Replicate. Developed by sczhou, this model is a face restoration algorithm: a transformer predicts entries from a learned codebook of high-quality facial features and uses them to rebuild degraded faces. Like gfpgan, it is typically applied to old photos and AI-generated faces.

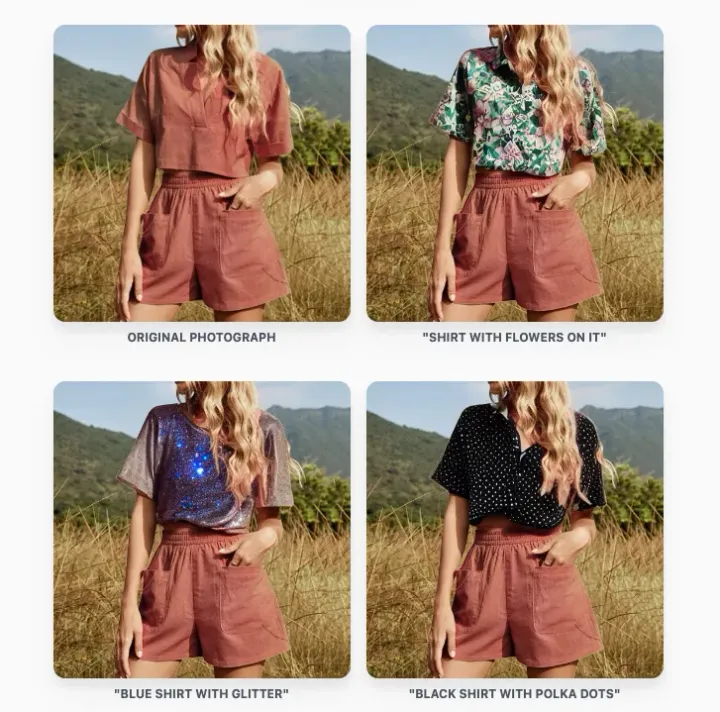

4. Stable-diffusion-inpainting

Stable-diffusion-inpainting, with 8.3M runs, is a model developed by stability-ai for image inpainting. Given an image, a mask marking the region to replace, and a text prompt, it uses a diffusion process to fill in the masked pixels so that they blend with the rest of the picture. This makes it useful for restoring images with missing or corrupted parts, and for removing or replacing objects.
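
To make the role of the mask concrete, here is a deliberately simple Python sketch. It is not the Stable Diffusion method: it swaps in a naive neighbor-averaging fill on a grayscale grid, purely to show that inpainting changes only the masked pixels and derives them from their surroundings. The function name `naive_inpaint` and the list-of-lists image format are assumptions made for this example.

```python
from typing import List

Image = List[List[float]]  # grayscale pixel values, row by row
Mask = List[List[bool]]    # True marks a pixel that should be filled in


def naive_inpaint(image: Image, mask: Mask, iterations: int = 50) -> Image:
    """Fill masked pixels by repeatedly averaging their four neighbors.

    A toy stand-in for a real inpainting model: unmasked pixels are never
    modified, and masked pixels gradually take on values from their context.
    """
    height, width = len(image), len(image[0])
    # Start with masked pixels blanked out so their original values are ignored.
    out = [[0.0 if mask[y][x] else image[y][x] for x in range(width)]
           for y in range(height)]
    for _ in range(iterations):
        nxt = [row[:] for row in out]
        for y in range(height):
            for x in range(width):
                if not mask[y][x]:
                    continue  # known pixel: leave untouched
                neighbors = [
                    out[ny][nx]
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                    if 0 <= ny < height and 0 <= nx < width
                ]
                if neighbors:
                    nxt[y][x] = sum(neighbors) / len(neighbors)
        out = nxt
    return out


# A 3x3 image whose center pixel is "missing".
image = [[1.0, 1.0, 1.0], [1.0, 9.9, 1.0], [1.0, 1.0, 1.0]]
mask = [[False, False, False], [False, True, False], [False, False, False]]
print(naive_inpaint(image, mask)[1][1])
```

A diffusion-based inpainting model follows the same contract (image and mask in, image out) but generates plausible new content for the masked area instead of averaging.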

5. Swinir

Swinir, with 3.6M runs, is a model developed by jingyunliang for image restoration. It builds on the Swin Transformer architecture to improve image quality through super-resolution, denoising, and JPEG compression artifact reduction.

6. Real-esrgan

Real-esrgan, with 3.4M runs, is an image super-resolution model published by nightmareai. It uses a generative adversarial network to upscale images while preserving details and textures. It is designed to handle real-world degradations such as noise and compression, with the aim of producing sharper results without adding obvious artifacts.

7. Controlnet-hough

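Controlnet-hough, with 2.9M runs, is another image generation model developed by jagilley. It first detects the straight lines in an input image (the name refers to the Hough transform, a classical technique for finding lines and other shapes in images), then uses ControlNet with Stable Diffusion to generate a new image that follows those lines. This makes it a good fit for structured scenes such as buildings and interiors.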
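
For readers curious about the Hough technique itself, here is a minimal pure-Python sketch of the classical Hough transform for straight lines. It only illustrates the voting idea and makes no claim about the detector the model actually runs; the function names `hough_votes` and `strongest_line` are invented for this example.

```python
import math
from collections import Counter
from typing import Iterable, Tuple

Point = Tuple[int, int]


def hough_votes(points: Iterable[Point], theta_steps: int = 180) -> Counter:
    """Accumulate votes in (rho, theta index) space.

    Each point (x, y) votes for every line rho = x*cos(theta) + y*sin(theta)
    that passes through it, with theta sampled over [0, pi).
    """
    votes: Counter = Counter()
    for x, y in points:
        for i in range(theta_steps):
            theta = math.pi * i / theta_steps
            rho = round(x * math.cos(theta) + y * math.sin(theta))
            votes[(rho, i)] += 1
    return votes


def strongest_line(points: Iterable[Point], theta_steps: int = 180) -> Tuple[int, float, int]:
    """Return (rho, theta in degrees, vote count) for the most-voted line."""
    (rho, i), count = hough_votes(points, theta_steps).most_common(1)[0]
    return rho, 180.0 * i / theta_steps, count


# Edge pixels lying on the horizontal line y = 5.
edge_pixels = [(x, 5) for x in range(100)]
print(strongest_line(edge_pixels))
```

Points that lie on the same line all vote for the same (rho, theta) cell, so peaks in the vote table correspond to lines in the image. For the horizontal line above, the peak sits at theta = 90 degrees and rho = 5.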

8. Swin2sr

Swin2sr, with 1.4M runs, is another image super-resolution model, developed by mv-lab. It uses the SwinV2 Transformer architecture to enhance the resolution of images, including heavily compressed ones, while maintaining their details and textures. Swin2sr has been used for various applications, including medical imaging and satellite imagery.

9. Realesrgan

Realesrgan, with 1.4M runs, is another image super-resolution model, published by xinntao, the lead author of Real-ESRGAN. It is based on the same Real-ESRGAN method as the nightmareai entry at rank 6 and combines generative adversarial training with perceptual loss functions to enhance the resolution of images. The aim is upscaled output that stays faithful to the original content.

10. Styleclip

Finally, Styleclip, with 688.5K runs, is a model developed by orpatashnik for text-driven image editing. It uses CLIP, a model that links text and images, to steer a StyleGAN generator so that an input image is modified to match a textual prompt, for example by changing a hairstyle or facial expression. Styleclip has been used for various applications, including art and design.

In conclusion, these were the ten most-run AI image-to-image models on Replicate as of April 2023. Whether you're looking to restore faces, upscale images, fill in missing regions, or generate images from sketches, these models cover a wide range of applications. So why not try them out and see how they can improve your image processing workflows?
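
If you want to try one of these models from code, the following is a minimal sketch using Replicate's Python client and its `replicate.run` function. Several things here are assumptions rather than facts from this article: the helper names `model_ref` and `run_model`, the version placeholder, and the input key shown in the usage comment. Each model defines its own inputs, so check its page on Replicate for the real version id and parameter names.

```python
import os
from typing import Any, Dict


def model_ref(owner: str, name: str, version: str) -> str:
    """Build the 'owner/name:version' reference string that replicate.run expects."""
    return f"{owner}/{name}:{version}"


def run_model(ref: str, inputs: Dict[str, Any]) -> Any:
    """Run a hosted model and return its output.

    Requires the third-party client (pip install replicate) and an API token
    in the REPLICATE_API_TOKEN environment variable.
    """
    if "REPLICATE_API_TOKEN" not in os.environ:
        raise RuntimeError("Set REPLICATE_API_TOKEN before calling run_model().")
    import replicate  # imported lazily so the helpers above work without it

    return replicate.run(ref, input=inputs)


# Hypothetical usage; the version id and the "img" key are placeholders:
#
#   ref = model_ref("tencentarc", "gfpgan", "<version-id-from-the-model-page>")
#   with open("old_photo.jpg", "rb") as photo:
#       output = run_model(ref, {"img": photo})
#   print(output)
```

Keeping the reference string and the input dictionary separate makes it easy to swap in any other model from the list by changing only those two values.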
