Google DeepMind says AI has discovered new solutions to 2 famous math problems

This announcement comes hot on the heels of another DeepMind paper that used AI to discover millions of new materials.

Artificial intelligence and machine learning show tremendous potential to advance scientific knowledge by automating discovery and problem-solving. However, most current AI systems struggle to independently generate discoveries that are both genuinely novel and verifiably true. A new research paper from DeepMind introduces a technique called FunSearch that addresses some of these challenges and may help realize AI's potential to drive progress in mathematics.

Subscribe or follow me on Twitter for more content like this!

The Challenge of AI-Driven Scientific Discovery

Large pre-trained language models have achieved impressive, sometimes human-level, performance on many language-based tasks. However, because these systems have not experienced the world the way humans have, they lack a comprehensive understanding of physics and of cause-and-effect relationships. As a result, their ability to autonomously generate new, factually correct scientific insights is limited.

Prior work has shown that language models can "hallucinate" incorrect information when prompted to speculate or hypothesize without constraints. While creativity is useful, unfettered generation of inaccurate claims would not constitute valid scientific discovery. For an AI system to make a legitimate contribution, it must present solutions that are objectively verifiable as true through empirical testing or logical proof.

The research problem FunSearch aims to address is how to harness the generative capabilities of large language models while avoiding incorrect or unverifiable ideas. Directly querying models to provide hypothesized discoveries risks hallucination and leaves subjective human judgement as the sole arbiter of what is deemed factual. A more rigorous approach is needed to systematically develop, refine, and validate potential discoveries in silico.

The FunSearch Methodology

To navigate this challenge, FunSearch introduces a new methodology for evolutionary, AI-driven scientific discovery. In fact, I'd say it combines two interesting techniques.

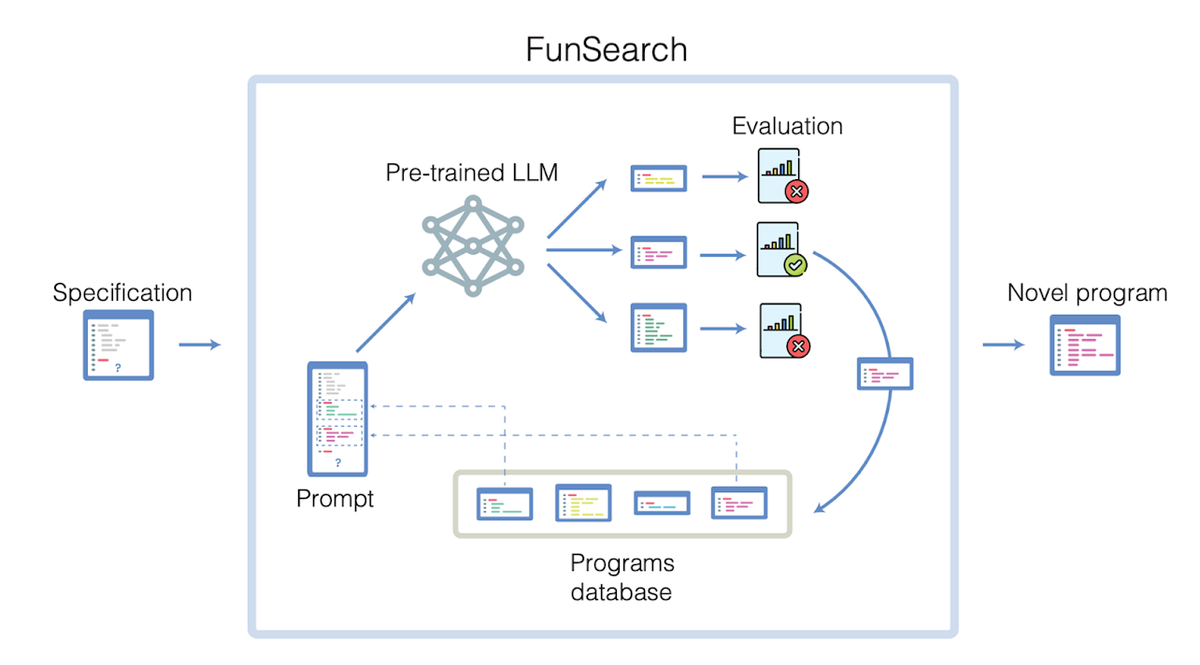

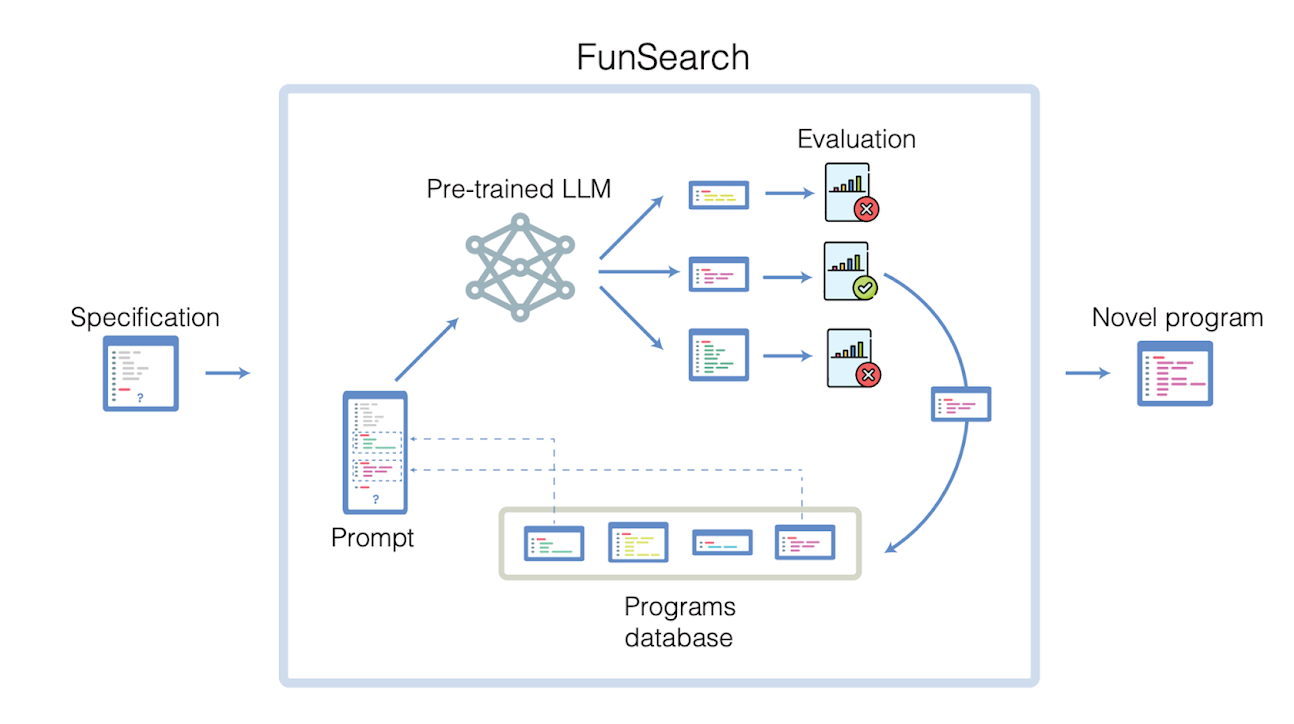

At its core, FunSearch pairs a pre-trained large language model with an automated "evaluator" component. The language model's role is to creatively build on existing solutions to a problem by proposing new ones written as computer code. Interesting idea number one, then, is treating a math problem as a coding problem: rather than asking for the answer directly, FunSearch searches for a program that constructs the answer.
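As a toy illustration of "the answer is a program", here is a Python function (my own example, not one FunSearch found) that writes down a valid cap set, a set of grid points with no three on a line, in any dimension `n`: all points whose coordinates are only 0 or 1. It is a classic, very simple construction giving 2**n points, well below the records FunSearch improved on, but it shows why a short program can be a better object to search over than a raw list of points: the same few lines describe a solution at every scale. The name `solve` is hypothetical.

```python
import itertools
from typing import List, Tuple


def solve(n: int) -> List[Tuple[int, ...]]:
    """Return a cap set in F_3^n: every point whose coordinates are 0 or 1.

    Why it works: three distinct points x, y, z of F_3^n are collinear exactly
    when x + y + z = 0 (mod 3) in every coordinate. With coordinates in {0, 1},
    each coordinate sum is between 0 and 3, so it is 0 mod 3 only when it is
    0 or 3, i.e. when x, y, z agree in that coordinate. Agreeing everywhere
    means x = y = z, so no three *distinct* points lie on a line.
    """
    return list(itertools.product((0, 1), repeat=n))


for n in range(1, 5):
    print(n, len(solve(n)))  # prints: 1 2 / 2 4 / 3 8 / 4 16
```

The program stays four lines long no matter how large `n` gets, whereas the list of points it describes doubles with every dimension.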

The second interesting technique is the evaluator, which runs objective tests on the generated programs to assess their validity and quality against the problem's constraints, with no human in the loop. It plays a role similar to the critic in other agent-based systems, acting as a brake on hallucinations.
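Here is a hedged sketch of what an evaluator could look like for the cap set setting. For simplicity it assumes the candidate program defines a hypothetical function `solve(n)` that returns a list of points; FunSearch itself evolves a scoring function that plugs into a fixed program, so this interface is my assumption. The evaluator executes the candidate, discards it if it crashes, verifies the "no three points on a line" property exhaustively, and returns the set size as the score. It uses bare `exec` for brevity; a real system would run untrusted code in a proper sandbox with time limits.

```python
import itertools
from typing import Optional


def is_cap_set(points, n: int) -> bool:
    """True if `points` are distinct vectors in F_3^n with no three on a common line.

    In F_3^n, three distinct points x, y, z are collinear exactly when
    x + y + z = 0 componentwise (mod 3).
    """
    pts = [tuple(p) for p in points]
    if len(set(pts)) != len(pts):
        return False  # duplicate points
    if any(len(p) != n or any(c not in (0, 1, 2) for c in p) for p in pts):
        return False  # not a point of F_3^n
    for x, y, z in itertools.combinations(pts, 3):
        if all((a + b + c) % 3 == 0 for a, b, c in zip(x, y, z)):
            return False  # found three collinear points
    return True


def evaluate(program_source: str, n: int) -> Optional[int]:
    """Hypothetical evaluator: score a candidate program by the cap set it returns.

    Returns the set size, or None if the program crashes, lacks `solve`, or
    its output fails verification. No sandboxing: illustration only.
    """
    namespace: dict = {}
    try:
        exec(program_source, namespace)
        candidate = [tuple(p) for p in namespace["solve"](n)]
        valid = is_cap_set(candidate, n)
    except Exception:
        return None  # broken programs are simply discarded
    return len(candidate) if valid else None


good = "def solve(n):\n    return [(0, 0), (0, 1), (1, 0), (1, 1)]\n"
bad = "def solve(n):\n    return [(0, 0), (1, 1), (2, 2)]\n"  # three points on one line
print(evaluate(good, 2), evaluate(bad, 2))  # prints: 4 None
```

Because the score comes only from checks the evaluator runs itself, a candidate that merely claims a large cap set earns nothing: it has to produce one.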

Through iterative exchanges between the generator and evaluator components, initial seed solutions can gradually evolve into more refined and higher-performing discoveries. And by expressing ideas as executable programs, FunSearch ensures proposals can be automatically investigated and verified rather than relying solely on descriptive speculation.
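The generate-and-evaluate loop can be sketched as a toy in Python, under loud assumptions. It is loosely modeled on the idea of evolving only a `priority` function inside a fixed program: here the fixed part greedily builds a cap set, adding points in order of priority. The language model is replaced by a hypothetical `propose` function that randomly perturbs two numeric weights in a program template, and the "program database" is a plain list from which parents are drawn among the current top five. Real FunSearch lets an LLM rewrite the code freely and uses a more elaborate database; none of the names or parameters below come from the paper.

```python
import itertools
import random
from typing import List, Optional, Tuple

# Hypothetical program template. In FunSearch an LLM rewrites the code freely;
# in this toy, the stand-in "LLM" can only change the two numeric weights.
TEMPLATE = """
def priority(point, n):
    nonzero = sum(1 for c in point if c != 0)
    twos = sum(1 for c in point if c == 2)
    return {a} * nonzero + {b} * twos
"""


def third_point(p, q):
    """The point completing the line through p and q in F_3^n: -(p + q) mod 3."""
    return tuple((-x - y) % 3 for x, y in zip(p, q))


def evaluate(source: str, n: int) -> Optional[int]:
    """Evaluator: run the candidate's priority() inside a fixed greedy skeleton.

    Points are visited by descending priority and kept only if they do not
    complete a line with two points already kept, so the result is always a
    valid cap set; its size is the score. Returns None if the program fails.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)  # no sandboxing: illustration only
        points = sorted(itertools.product(range(3), repeat=n),
                        key=lambda p: namespace["priority"](p, n), reverse=True)
    except Exception:
        return None
    chosen: List[Tuple[int, ...]] = []
    blocked = set()  # points that would complete a line with two chosen points
    for p in points:
        if p not in blocked:
            blocked.update(third_point(p, q) for q in chosen)
            chosen.append(p)
    return len(chosen)


def propose(parent_weights, rng: random.Random):
    """Stand-in for the LLM: perturb the parent's weights and render new source."""
    a, b = (round(w + rng.gauss(0.0, 1.0), 3) for w in parent_weights)
    return (a, b), TEMPLATE.format(a=a, b=b)


def toy_funsearch(n: int = 4, iterations: int = 200, seed: int = 0):
    """Generate-evaluate loop; returns (best_score, weights, source)."""
    rng = random.Random(seed)
    start = TEMPLATE.format(a=0.0, b=0.0)
    database = [(evaluate(start, n), (0.0, 0.0), start)]  # the seed program
    for _ in range(iterations):
        # Pick a parent among the current top programs (a crude selection rule).
        database.sort(key=lambda entry: entry[0], reverse=True)
        parent = rng.choice(database[:5])
        weights, source = propose(parent[1], rng)
        score = evaluate(source, n)
        if score is not None:  # the evaluator filters out broken programs
            database.append((score, weights, source))
    return max(database, key=lambda entry: entry[0])


best_score, best_weights, best_source = toy_funsearch()
print(best_score, best_weights)
```

Because the seed program always stays in the database, the returned score can never fall below the seed's; whether the random weight tweaks find a larger cap set depends on the seed and the number of iterations.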

This evolutionary approach promotes interpretable solutions that demonstrate their validity rather than opaque claims. It allows the system to systematically develop discoveries in a manner analogous to the scientific method, guided by empirical results rather than subjective impressions.

Note: I strongly recommend you check out this article on another DeepMind project: DeepMind uses AI to discover 2.2 million new materials – equivalent to nearly 800 years’ worth of knowledge.

Application to Open Mathematical Problems

To evaluate FunSearch's ability to drive genuine discovery, the researchers applied it to two problems: the "cap set problem" in extremal combinatorics, a long-standing open question, and the "online bin packing problem", a classic challenge in operations research.

For the cap set problem, FunSearch discovered arrangements of points that, in some settings, exceeded the largest known constructions, advancing the state of the art by the largest amount in 20 years. It also outperformed specialized solvers on scaled-up problem instances that were too computationally intensive for those methods. From the authors:

> We first address the cap set problem, an open challenge, which has vexed mathematicians in multiple research areas for decades. Renowned mathematician Terence Tao once described it as his favorite open question. We collaborated with Jordan Ellenberg, a professor of mathematics at the University of Wisconsin–Madison, and author of an important breakthrough on the cap set problem.

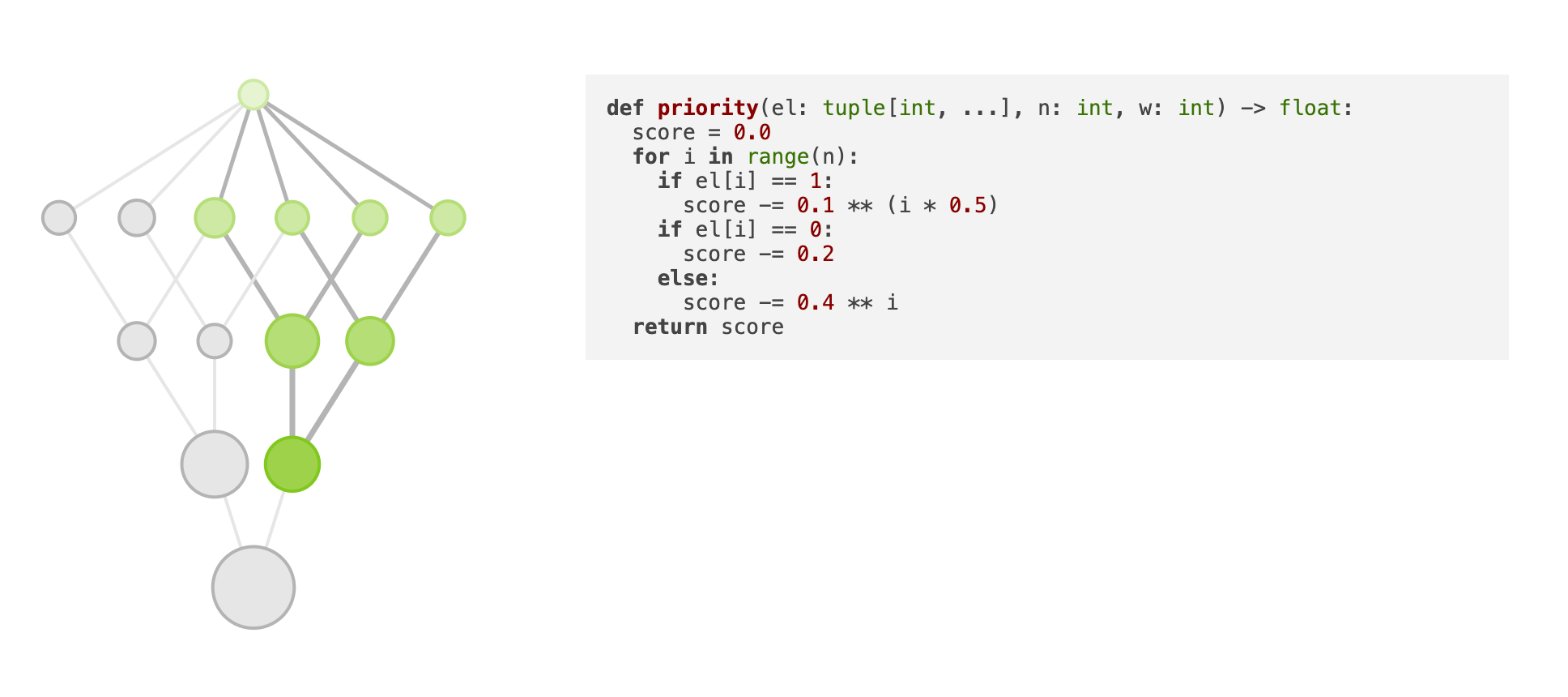

> The problem consists of finding the largest set of points (called a cap set) in a high-dimensional grid, where no three points lie on a line. This problem is important because it serves as a model for other problems in extremal combinatorics - the study of how large or small a collection of numbers, graphs or other objects could be. Brute-force computing approaches to this problem don’t work – the number of possibilities to consider quickly becomes greater than the number of atoms in the universe.

> FunSearch generated solutions - in the form of programs - that in some settings discovered the largest cap sets ever found. This represents the largest increase in the size of cap sets in the past 20 years. Moreover, FunSearch outperformed state-of-the-art computational solvers, as this problem scales well beyond their current capabilities.
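For readers who want the precise setting: the "high-dimensional grid" in the cap set problem is usually $\mathbb{F}_3^n$, the vectors of length $n$ with entries 0, 1, 2 and arithmetic mod 3. The standard derivation below (mine, not from the article) shows why "no three points on a line" reduces to a simple arithmetic test that a program can check exhaustively:

$$
\begin{aligned}
&\text{Line in } \mathbb{F}_3^n: \quad \{\,a,\; a+d,\; a+2d\,\}, \qquad a, d \in \mathbb{F}_3^n,\ d \neq 0 \\
&\text{Sum of its points: } a + (a+d) + (a+2d) = 3a + 3d \equiv 0 \pmod 3 \\
&\text{Converse: if } x \neq y \text{ and } x + y + z \equiv 0, \text{ set } a = x,\ d = y - x: \quad z \equiv -x - y \equiv x + 2(y - x) = a + 2d \\
&\text{Cap set: } A \subseteq \mathbb{F}_3^n \text{ with } x + y + z \not\equiv 0 \text{ for all distinct } x, y, z \in A \\
&\text{Example } (n = 2): \quad (0,0) + (1,1) + (2,2) = (3,3) \equiv (0,0) \ \Rightarrow\ \text{collinear}
\end{aligned}
$$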

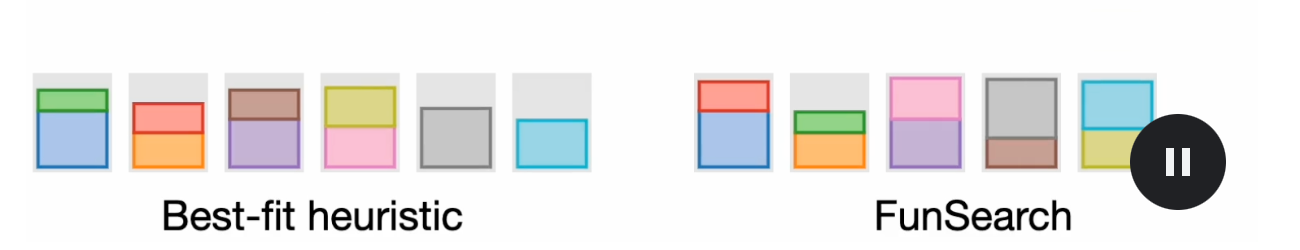

For the online bin packing problem, which asks how to pack items of varying sizes, arriving one at a time, into as few fixed-capacity bins as possible, FunSearch autonomously developed a tailored packing heuristic. In empirical tests, it used fewer bins than established heuristics on the kinds of problem instances it was tailored to. From the authors:

> Encouraged by our success with the theoretical cap set problem, we decided to explore the flexibility of FunSearch by applying it to an important practical challenge in computer science. The “bin packing” problem looks at how to pack items of different sizes into the smallest number of bins. It sits at the core of many real-world problems, from loading containers with items to allocating compute jobs in data centers to minimize costs.

> The online bin-packing problem is typically addressed using algorithmic rules-of-thumb (heuristics) based on human experience. But finding a set of rules for each specific situation - with differing sizes, timing, or capacity – can be challenging. Despite being very different from the cap set problem, setting up FunSearch for this problem was easy. FunSearch delivered an automatically tailored program (adapting to the specifics of the data) that outperformed established heuristics – using fewer bins to pack the same number of items.

> Hard combinatorial problems like online bin packing can be tackled using other AI approaches, such as neural networks and reinforcement learning. Such approaches have proven to be effective too, but may also require significant resources to deploy. FunSearch, on the other hand, outputs code that can be easily inspected and deployed, meaning its solutions could potentially be slotted into a variety of real-world industrial systems to bring swift benefits.
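The "heuristic as a small program" idea for online bin packing can be sketched in Python under one assumption of mine: items arrive one at a time, a priority function scores every open bin that still has room, and the item goes to the highest-scoring bin, or into a new bin if none fits. The two priority functions are classic human-designed rules (first fit and best fit), not FunSearch's evolved heuristic, which is not reproduced here; the function names, capacity, and item sizes are made up for illustration.

```python
from typing import Callable, List

# A priority function scores putting `item` into open bin number `index`,
# which currently has `remaining` free capacity. Higher is better.
Priority = Callable[[int, int, int], float]


def first_fit(item: int, remaining: int, index: int) -> float:
    """First fit: prefer the earliest-opened bin that has room."""
    return -index


def best_fit(item: int, remaining: int, index: int) -> float:
    """Best fit: prefer the bin that would be left with the least free space."""
    return -(remaining - item)


def pack_online(items: List[int], capacity: int, priority: Priority) -> List[List[int]]:
    """Pack items in arrival order without look-ahead (the 'online' setting).

    Each item goes to the feasible bin with the highest priority; if no open
    bin has room, a new bin is opened.
    """
    bins: List[List[int]] = []
    remaining: List[int] = []
    for item in items:
        if item > capacity:
            raise ValueError(f"item {item} exceeds bin capacity {capacity}")
        feasible = [i for i, free in enumerate(remaining) if free >= item]
        if feasible:
            target = max(feasible, key=lambda i: priority(item, remaining[i], i))
        else:
            bins.append([])
            remaining.append(capacity)
            target = len(bins) - 1
        bins[target].append(item)
        remaining[target] -= item
    return bins


items = [4, 7, 3, 6]  # made-up arrival sequence, bin capacity 10
print(len(pack_online(items, 10, first_fit)), len(pack_online(items, 10, best_fit)))  # prints: 3 2
```

Swapping one rule for another changes only the priority function, never the packing loop, which is what makes this kind of heuristic a convenient target for program search. On this made-up sequence best fit saves a bin; on other inputs the ranking can differ.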

In both cases, FunSearch expressed its discoveries as programs that could be systematically analyzed, built upon, and refined. By studying the programs FunSearch evolved over multiple runs, the researchers gained new combinatorial insights.

Conclusion

The research presented in this paper demonstrates the potential of AI techniques like FunSearch to systematically and autonomously drive progress in computational mathematics, and possibly in other scientific domains. By pairing generative language models with automated evaluators, FunSearch can iteratively develop and refine executable programs that express its discoveries, without relying solely on subjective human judgment.

In its first applications to long-standing open problems, FunSearch has produced verifiably novel solutions and new combinatorial insights. Because its results take the form of human-interpretable code, they are not only easy to verify but also easy for researchers to inspect, collaborate on, and refine further.

While preliminary, FunSearch and the discovery of millions of potential new materials in another DeepMind paper establish the feasibility of AI-powered discoveries that are objectively verifiable and that add substantial new knowledge rather than isolated facts. As language models and automated testing infrastructure continue to advance, similar collaborative approaches may help tackle problems that are intractable for humans or machines working alone.

Looking ahead, there are opportunities to scale FunSearch across diverse scientific domains as suitable representation and evaluation schemes are developed. Generalizing the methodology beyond mathematics poses technical challenges but also opens opportunities for high-impact applications. Continued work will also refine how discoveries can best be explained to, and built upon by, human experts.

Overall, FunSearch opens promising new avenues for using state-of-the-art AI to systematically automate discovery processes and thereby accelerate progress on humanity's hardest challenges. With appropriate safeguards and in collaboration with researchers, generative AI could help push the scientific frontier forward for many years to come.

