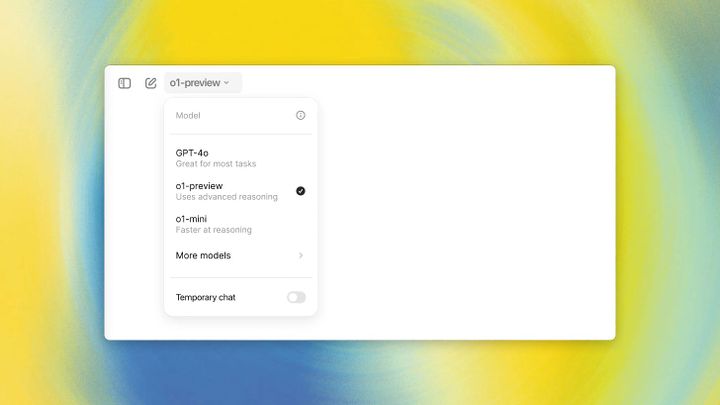

What to expect for next week's GPT-4 launch

OpenAI and Bing's GPT-4 is coming in March 2023. It's going to be "multimodal."

There's some pretty exciting news about the release of GPT-4, the latest AI language model from OpenAI. Microsoft Germany's CTO, Andreas Braun, confirmed that it's coming within the next week and that it's going to be "multimodal," meaning it will be able to work with other kinds of input, like video, images, and sound, as well as text.

This is a big deal because previous versions of GPT (like GPT-3) only worked with text. But the news reports we have don't give us a ton of specifics about what GPT-4 will actually be able to do, so we don't know for sure how it will all work.

One interesting tidbit is that Microsoft is also working on "confidence metrics" to make their AI more reliable. And, apparently, they released their own multimodal language model called Kosmos-1 earlier this month.

The multimodal part is huge. If GPT-4 works really well with video, text, and images, then I think a lot of startups that currently function as wrappers for non-text models could be in as much trouble as Jasper (formerly Jarvis and Conversion) was back when ChatGPT launched for free. (I canceled my Jasper subscription as soon as it became clear I could use ChatGPT directly, at no cost, to do the same work.)

One other thought: if GPT-4 is as big a leap as many are hoping, and it arrives this soon after GPT-3.5 and the ChatGPT interface, expect a big reinforcement of the "AI escape velocity" narrative we're already seeing on Twitter. If GPT-4 turns out to be just an incremental improvement, you'll get the corresponding "overhyped" argument instead.

> Good morning.
>
> 1 day closer to AGI. pic.twitter.com/GwLetjnUxa
>
> — Smoke-away (@SmokeAwayyy) March 11, 2023

Would be pretty nice if AGI let us get to here!

So, there's a lot we still don't know about GPT-4 and how it will work, but it's definitely exciting to see what new possibilities it might open up for AI. It's also worth noting that the main source of news about this release seems to be an article from Microsoft Germany, and there's nothing on the OpenAI blog about it yet. So, we'll have to wait and see what happens!

Subscribe or follow me on Twitter for more content like this!
